I guess Sharp/Dynabook must’ve liked my coverage of their Portege X40-M2 unit. Why say so? Because about two days after I sent that unit back, they sent me another, more powerful laptop to look at. Today’s blog post describes my Dynabook Tecra A60-M2 intake experience (Model PNL21U-017004). It’s a bigger beast, but a little less sturdy: it’s got what feels like an all-plastic lower/keyboard deck, albeit with minimal flex. For the first time ever, it also comes with Windows 11 24H2 Enterprise loaded.

Describing Dynabook Tecra A60-M2 Intake Process

Again, and surprisingly, Dynabook uses closed-cell plastic foam inserts to enshroud the unit in an otherwise all-cardboard set of nested shipping boxes. It comes with exactly two parts: the laptop itself and the power brick/power cord. Initial setup was absurdly easy. But, for some odd reason, Intel BE201 Wi-Fi 7 (802.11be) adapters won’t let me log into the 5 GHz band on my Asus router. I have to use the 2.4 GHz band instead. If I need to go faster than that, I can plug my trusty StarTech GbE USB 3 adapter into one of its two 5 Gbps USB 3.2 Gen 1 ports.

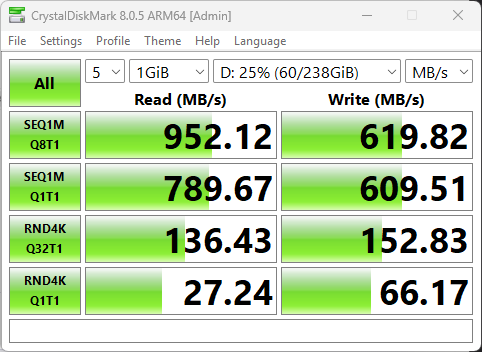

It took me some time to get all the bits and pieces in place for my usual setup. I used Patch My PC Home Updater to bring in 7-Zip, GadgetPack, CrystalDiskMark and CrystalDiskInfo, CPUID, Everything, Chrome, and more. Because this is an Intel-flavored Copilot+ PC, I also installed Intel Driver and Support Assistant, along with the Dynabook Support Utility to check for vendor UEFI, firmware, and driver updates.

A Clean, Clean, Clean Machine

I’ve got to say this is one of the cleanest review units I’ve ever gotten. It required very little by way of update or clean-up to bring entirely up to snuff. It’s also got the fastest and most accurate fingerprint scanner I’ve ever used (Device Manager identifies it as a FocalTech Electronics device). So far, it’s fast, has a nice 16″ display, and does everything I’ve asked it to in short order.

The Tecra A60-M2 Components, Listed

According to the vendor web page, this unit goes for US$1249 (MSRP). I don’t see any major discounts available online but it’s pretty new still, so they may be coming. Here’s what’s inside:

- CPU: Intel Core Ultra 5 225U

- OS: Windows 11 24H2 Enterprise (26100.4946)

- 16.0″ WUXGA display (1920×1200)

- 16 GB DDR5-5600 (Samsung)

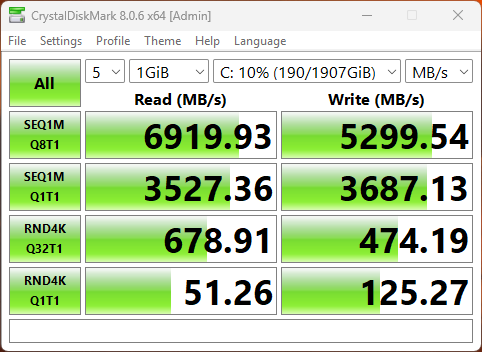

- 0.5TiB Samsung OEM PCIe Gen4 NVMe SSD

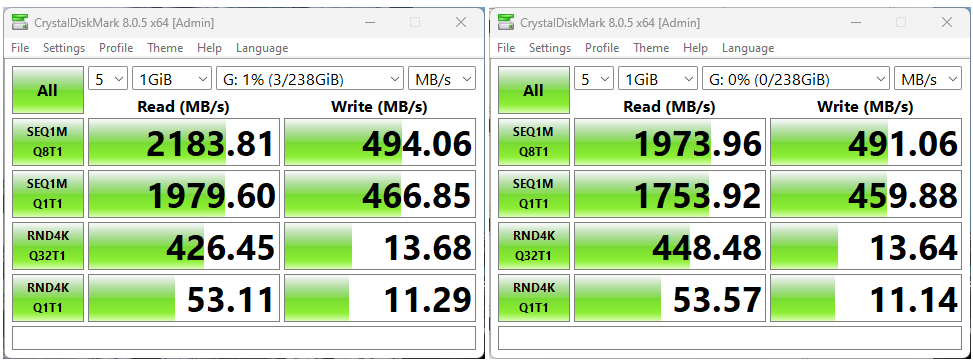

- Ports: 2 x USB4/TB4 USB-C ports, 2 x USB 3.2 Gen 1 ports, HDMI, RJ-45 GbE, microSD, 3.5 mm headset jack

- 60 Wh Lithium polymer battery; 65W USB-C power brick

What it doesn’t have that I might want? Offhand, I’d say a Hello-capable IR camera, and a touch display. Other than those things, and a bigger SSD, it’s pretty well-equipped. What one gets for US$1,250 isn’t at all bad.

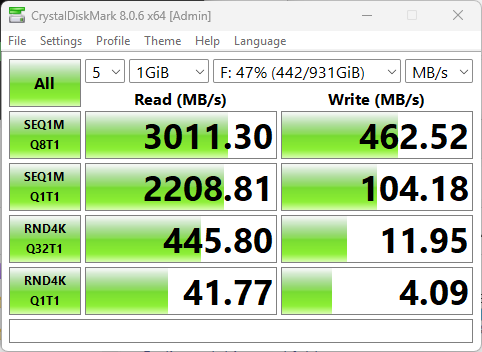

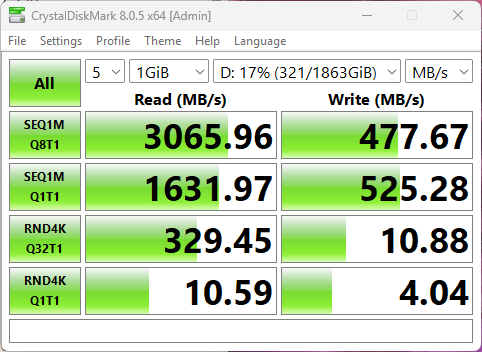

All in all, I like it pretty well so far. I’ll report further as I spend a bit more time with it, and learn more about what it can and can’t do. I’m curious about its SSD speed, USB-C performance, and general processing oomph. Expect to hear more from me on all of those topics, soon. In the meantime, I’m having fun playing with this new toy.